What would it take to make an app that works like Uber, but without the corporate entity and without the high percentage that goes to Uber? Hmm… Let’s take some of the needed parts…

- Hailing a driver: a rider wants to know that the driver is safe, which driver is closest, and what the ride will cost.

- On the safety issue, let’s suppose a really good reputation system (see below).

- “Closest” can be done by integrating a good map app (see below).

- And a price: this could be as simple as a fixed function of per-ride, per-mile, and per-minute rates.

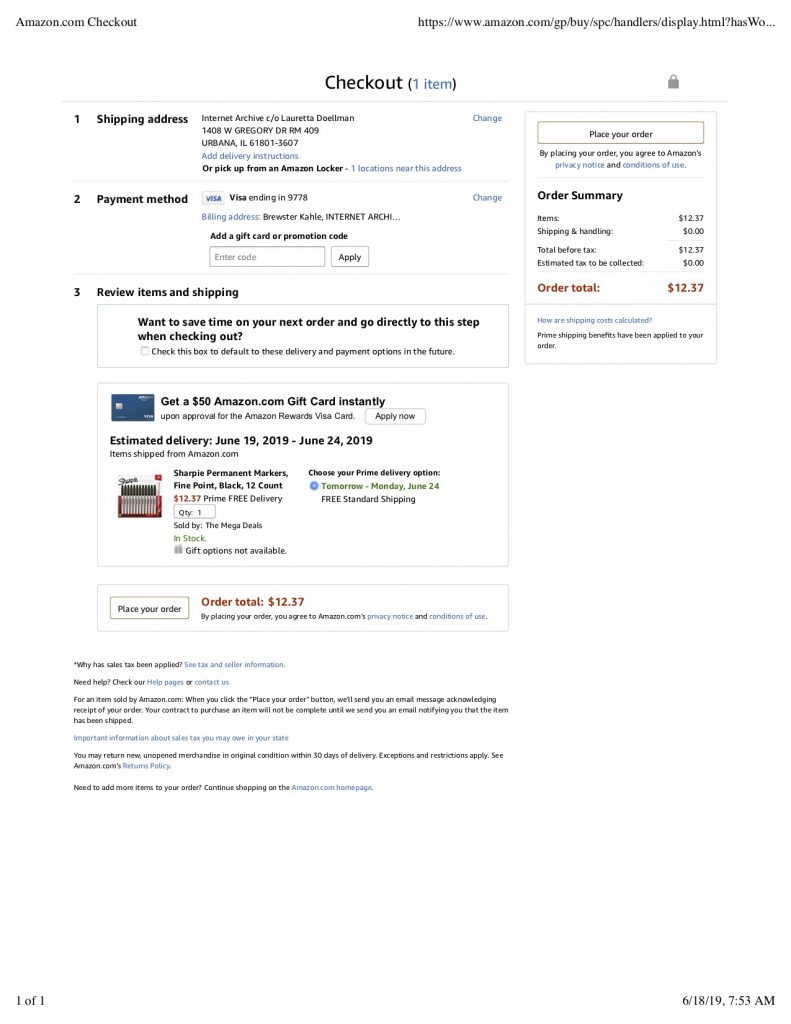
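To make the pricing idea concrete, here is a minimal sketch of a fare computed from a per-ride, a per-mile, and a per-minute rate. The names `FareRates` and `fare`, and the example rates, are made up for illustration; a real app would have to decide who sets the rates and in what currency.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FareRates:
    """Hypothetical rate card; the numbers used below are illustrative only."""
    per_ride: float    # flat amount charged for every ride
    per_mile: float    # amount charged per mile driven
    per_minute: float  # amount charged per minute of the ride


def fare(rates: FareRates, miles: float, minutes: float) -> float:
    """Return flat fee + distance charge + time charge, rounded to cents."""
    if miles < 0 or minutes < 0:
        raise ValueError("miles and minutes must be non-negative")
    total = rates.per_ride + rates.per_mile * miles + rates.per_minute * minutes
    return round(total, 2)


rates = FareRates(per_ride=2.00, per_mile=1.50, per_minute=0.25)
print(fare(rates, miles=4.0, minutes=12.0))  # 2.00 + 6.00 + 3.00 = 11.0
```

Because the function is fixed and public, a rider and a driver could each compute the same price before the ride starts, without a middleman quoting it.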

- Paying a driver: could be as easy as paying in crypto/bitcoin, part as the ride starts and the rest as it ends. But it could get more complicated if a 3rd party takes a cut, perhaps to pay the app developer (see below). If there is a 3rd party, don’t we have Uber again? Maybe, but it could be much lighter weight. There are also contracts in Ethereum that could help with arbitration in the case of disputes.

- A reputation system: Seems we need a strong reputation system. Yelp, it has been said, has been corrupted, and it is difficult to avoid this. Reddit? Slashdot? Maybe a mixture of up-votes/down-votes from anyone, mixing in your social network to favor your friends, or maybe subscribing to aggregators of reputations…

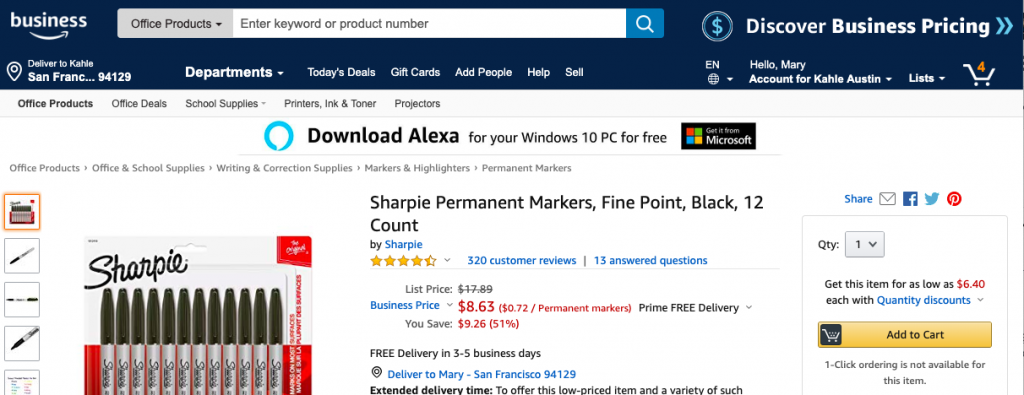
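One way to picture the "votes from anyone, but favor your friends" idea is a weighted average, where a vote from someone in your social network counts more than a vote from a stranger. This is only a sketch: the function name `reputation`, the `friend_weight` of 3, and the vote format are all assumptions, and it does nothing about fake accounts or vote-buying, which is the hard part.

```python
from typing import Iterable, Set, Tuple


def reputation(
    votes: Iterable[Tuple[str, int]],
    friends: Set[str],
    friend_weight: float = 3.0,
) -> float:
    """Score in [-1, 1] as seen by one viewer.

    votes: (voter_id, value) pairs, where value is +1 (up) or -1 (down).
    friends: voter ids in the viewer's social network.
    friend_weight: how much more a friend's vote counts (assumed value).
    """
    weighted_total = 0.0
    weight_sum = 0.0
    for voter, value in votes:
        if value not in (1, -1):
            raise ValueError("each vote must be +1 or -1")
        weight = friend_weight if voter in friends else 1.0
        weighted_total += weight * value
        weight_sum += weight
    # With no votes at all, treat the driver as neutral.
    return weighted_total / weight_sum if weight_sum else 0.0


votes = [("ann", 1), ("bob", -1), ("cat", 1)]
print(reputation(votes, friends=set()))    # strangers only: mildly positive
print(reputation(votes, friends={"bob"}))  # bob is a friend: turns negative
```

The same driver gets a different score depending on who is asking, which is the point: a down-vote from someone you know outweighs two up-votes from people you don't.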

- Map App: Google has nailed this, but I bet a rideshare app puts lots of load on it. We might need a per-ride payment to go to the map provider.

- Price and payment: could be one price per ride, and this has advantages. What if a rider could pre-tip, or a driver could pre-discount? That might help those that do not have good reputations yet. Does a rider pick between drivers, and vice versa? Since bitcoin is not widely used yet… how about Google Pay/Apple Pay? Venmo? PayPal? Some of these have evolved pretty anonymous payments.

- Evolving the app: what if there were many apps competing for the riders and drivers? What if it evolved into a system where the apps were somewhat compatible, so a driver could run many apps at once and they would compete for her business? Then the apps would need reputations as well, and they could evolve different algorithms. Does the app provider get a piece of the action?
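The payment ideas above (part paid as the ride starts, the rest as it ends, plus an optional pre-tip or pre-discount) can be sketched as a simple schedule. Everything here is hypothetical: the name `payment_schedule`, the 50% upfront default, and working in integer cents are choices made for the example, and no actual payment network is involved.

```python
from typing import Tuple


def payment_schedule(
    base_cents: int,
    pre_tip_cents: int = 0,
    pre_discount_cents: int = 0,
    upfront_percent: int = 50,
) -> Tuple[int, int]:
    """Return (paid_at_start, paid_at_end) in cents.

    base_cents: the agreed price of the ride.
    pre_tip_cents: extra offered by the rider before the ride.
    pre_discount_cents: reduction offered by the driver before the ride.
    upfront_percent: share of the total paid when the ride starts (assumed).
    """
    if not 0 <= upfront_percent <= 100:
        raise ValueError("upfront_percent must be between 0 and 100")
    if min(base_cents, pre_tip_cents, pre_discount_cents) < 0:
        raise ValueError("amounts must be non-negative")
    # A discount can reduce the price to zero but never below it.
    total = max(base_cents + pre_tip_cents - pre_discount_cents, 0)
    at_start = total * upfront_percent // 100
    at_end = total - at_start  # remainder, so the two parts always sum to total
    return at_start, at_end


print(payment_schedule(1100, pre_tip_cents=200))       # rider pre-tips $2
print(payment_schedule(1100, pre_discount_cents=100))  # driver discounts $1
```

Using integer cents avoids rounding surprises, and computing the final payment as a remainder guarantees the two installments add up to the agreed total.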

If this could work, it could mean a rapidly evolving ecosystem with many players at every level.

It could go very wrong: a great movie that shows this is Nerve (worth watching). It could be used for escort services and other match-making functions.

Anyway, I like the mental exercise of ______ but decentralized, where _____ is your favorite cool thing. Google Docs, Tesla, Slack, WordPress, Internet Archive…

(and has this already been done?)